Multimodal AI

What is Multimodal AI?

Author- SEO Content Writer

Artificial intelligence has spent most of its history operating in silos one model for text, another for images, another for audio. Multimodal AI breaks that boundary entirely. Multimodal AI is a type of artificial intelligence that can understand and process different types of information such as text, images, audio, and video all at the same time. Rather than analyzing a single data type in isolation, these systems combine inputs the way a human naturally would: seeing, hearing, and reading all at once to form a complete understanding.

This is no longer an emerging concept. The global multimodal AI market was valued at $1.73 billion in 2024 and is projected to reach $10.89 billion by 2030, growing at a CAGR of 36.8%. For businesses building with AI today, understanding multimodal artificial intelligence is not optional it is foundational.

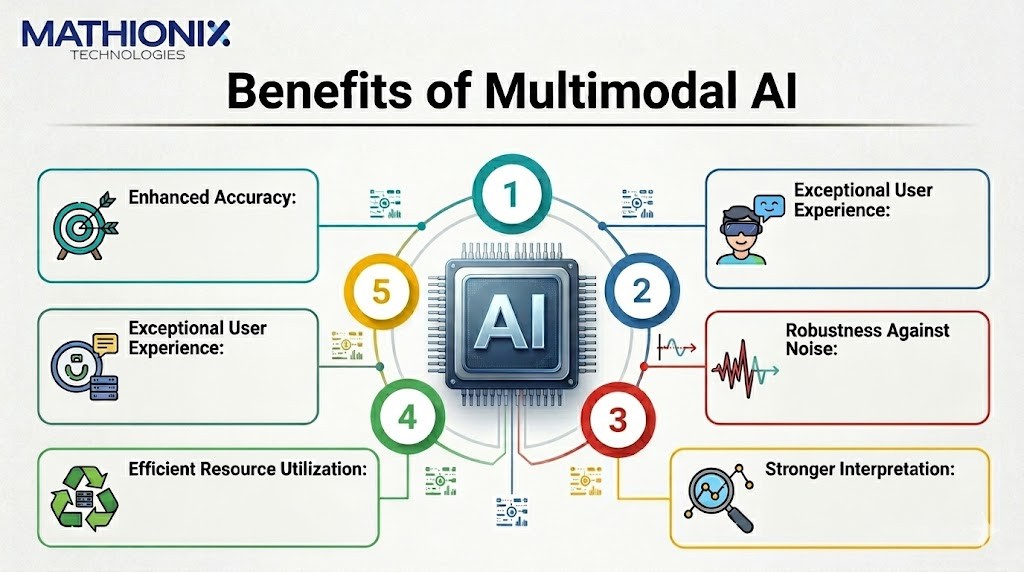

What Are the Benefits of Multimodal AI?

Multimodal AI does not just do more it does better. By pulling from multiple data streams simultaneously, these systems produce outputs that are more accurate, more intuitive, and far more useful in real-world conditions. Here is what that looks like in practice.

1. Enhanced Accuracy

Multimodal AI models combine information from various sources and analyze and interpret it simultaneously across multiple modalities, giving the model a broader, more well-rounded, and comprehensive understanding of each data type and its context and connections. For example, a model reviewing a medical image alongside a patient’s written symptoms will produce a more precise diagnosis than one working from either input alone.

2. Exceptional User Experience

Because multimodal gen AI models can process multisensory inputs, users are able to interact with them by speaking, gesturing, or using augmented or virtual reality controllers making technology more accessible to nontechnical users and expanding who can benefit from AI. This shift from text-only interfaces to natural, human-like interaction directly improves how real users engage with AI-powered products.

3. Robustness Against Noise

Real-world data is never clean. Multimodal AI can resolve problems with missing or noisy data and fill the gaps, resulting in an ability to understand things in a more complete way. When one data channel is degraded or incomplete, the model compensates using signals from other modalities something no single-input system can do.

4. Efficient Resource Utilization

AI models in general are becoming less expensive and more powerful with each passing month researchers at Sony AI recently demonstrated that a model that cost $100,000 to train in 2022 can now be trained for less than $2,000. Multimodal architectures benefit from this cost compression too, allowing businesses to deploy capable systems without the prohibitive upfront investment they once required.

5. Stronger Interpretation

Multimodal AI does not just see images it reads, hears, and understands, allowing it to give powerful and intelligent analysis useful for tasks like image captioning, visual question answering, scene understanding, content moderation, medical imaging analysis, and anomaly detection. This depth of interpretation is what separates it from conventional computer vision or NLP tools.

Generative AI vs. Multimodal AI: What's the Difference?

People often use these terms interchangeably, but they describe different things. Generative AI refers to AI systems that create new content text, images, code, audio based on a prompt. Multimodal AI refers to AI systems that can process and understand more than one type of data input.

The important point is that these categories overlap. The generative multimodal AI market was valued at $740.1 million in 2024, driven by high-quality content creation across video, text, and audio. A model like GPT-4o is both generative and multimodal it generates outputs while also accepting image, text, and audio as inputs. However, not all generative AI is multimodal (GPT-3 only handled text), and not all multimodal AI is generative (a surveillance system processing video and sensor data produces alerts, not creative content).

For businesses working with Mathionix on AI development, understanding this distinction matters when scoping a project. If you need content creation, generative AI is your driver. If you need richer input understanding documents, images, voice multimodal capabilities are what make the difference.

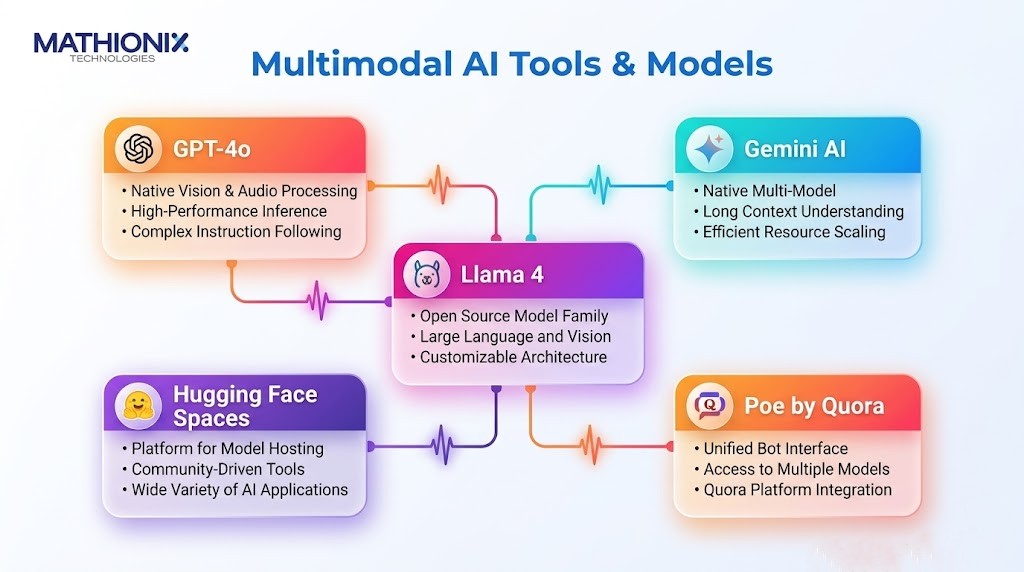

Best Multimodal AI Tools & Models in 2026

The multimodal AI landscape has matured quickly. Below are the most impactful models and tools available right now, covering both developer-grade models and accessible no-code options.

1. GPT-4o

OpenAI’s GPT-4o (“o” for Omni) processes text, images, and audio natively within a single neural network. It responds to voice inputs instantly, often in under 300 milliseconds, making it a strong fit for voice-based chatbots and virtual assistants in enterprise applications. GPT-4o leads MMLU Multidisciplinary Multi-task Language Understanding with 88.7% accuracy, showcasing its breadth of training.

2. Gemini AI

Google’s Gemini lineup has emerged as a formidable multimodal contender. With a context window of up to 1 million tokens (expanding to 2 million), Gemini 2.5 far surpasses GPT-4o’s 128K-token limit, enabling it to process full documents, codebases, and lengthy research papers in a single prompt. Gemini 2.5 Pro excels in visual reasoning tasks with 79.6% accuracy on specialized benchmarks.

3. Llama 4

In October 2025, Meta unveiled Llama 4 Scout and Llama 4 Maverick multimodal systems that can process and translate a wide range of data formats including text, video, images, and audio, marking a significant leap in AI’s ability to understand and interact with the world. Being open-weight, Llama 4 is particularly valuable for organizations that need to run multimodal models on their own infrastructure.

4. Hugging Face Spaces

For teams without deep technical resources, Hugging Face Spaces offers a no-code environment where users can deploy and test multimodal AI models including image captioning, visual question answering, and audio transcription directly through a browser interface, without writing a single line of code. It has become one of the most widely used platforms for rapid multimodal AI prototyping.

5. Poe by Quora

Poe gives non-technical users access to multiple multimodal AI models including GPT-4o and Claude through a single, unified chat interface. Users can upload images, ask follow-up questions, and switch between models without any setup. For small businesses exploring multimodal AI capabilities before committing to a full AI development engagement, Poe offers an accessible entry point.

GPT-4o vs Gemini: Which Is Better for Your Business?

There is no universal answer the right choice depends entirely on your use case and infrastructure. Here is what the data shows.

Gemini gives you scale and multimodality, while GPT gives you precision and workflow power. If your team is already embedded in Google Workspace and your work involves processing large documents, research files, or visual data at scale, Gemini’s massive context window and native Google integrations make it the stronger choice. Gemini integrates deeply with Google Workspace Docs, Sheets, Gmail allowing it to interact with and manipulate live documents.

On the other hand, if your priority is consistent writing quality, structured reasoning, real-time voice interaction, or building customer-facing AI applications, GPT-4o delivers. GPT-4o understands both text and voice natively, making it a great fit for voice-based chatbots or virtual assistants in enterprise applications.

From a cost standpoint, GPT-4.1 input is priced at $2 per million tokens, while Gemini 2.5 Pro starts at $1.25 per million tokens for shorter contexts making Gemini more economical for high-volume processing workloads.

The practical answer for most businesses in 2026: test both. Many enterprise teams are now running multi-model strategies, using Gemini for research and document analysis while relying on GPT-4o for conversational and customer-facing applications.

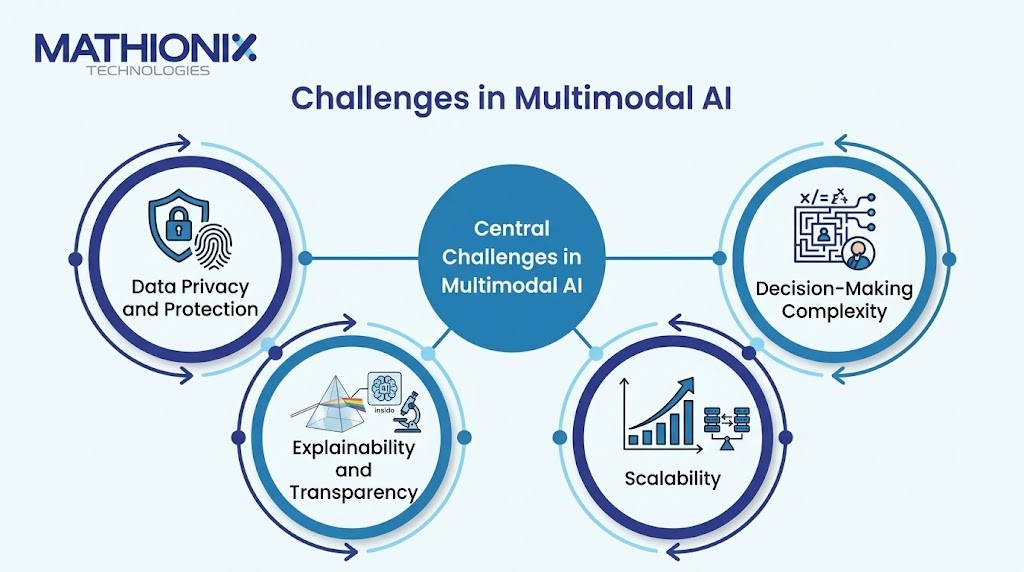

Key Challenges in Multimodal AI

Multimodal artificial intelligence delivers real power, but responsible AI development means acknowledging where the complexity lives.

1. Data Privacy and Protection

Because multimodal AI involves diverse inputs text, images, audio, and video maintaining consistent data quality is key, and privacy concerns are equally critical because multimodal data can reveal unintended patterns. A system processing employee voice recordings alongside HR documents, for instance, carries privacy obligations that text-only systems do not.

2. Explainability and Transparency

Most multimodal AI systems operate as black boxes. When a model fuses signals from an image, a document, and an audio clip to reach a conclusion, tracing exactly why it produced that output is genuinely difficult. For regulated industries finance, healthcare, legal this lack of explainability is a significant barrier to adoption.

3. Scalability

Key challenges in multimodal AI include data integration, scalability, missing or noisy data, and interpretability. Scaling a multimodal system to handle millions of inputs across several modalities simultaneously requires robust infrastructure, efficient model architectures, and careful engineering costs that can surprise organizations that underestimate multimodal complexity upfront.

4. Decision-Making Complexity

When multiple data modalities disagree for instance, an image suggests one thing while accompanying text suggests another the model must resolve that conflict. Poor fusion architectures can amplify uncertainty rather than reduce it, leading to less reliable outputs than a simpler, single-modality model might have produced.

Businesses can mitigate these risks by starting with well-scoped use cases, using open-source models where data sovereignty matters, investing in responsible AI practices from the beginning, and partnering with experienced AI development teams that understand both the promise and the pitfalls of multimodal systems.

Future of Multimodal AI

The trajectory of multimodal AI points in one direction: deeper integration into the fabric of how businesses operate. The multimodal AI market is estimated to grow at a CAGR of 32.7% from 2025 to 2034, with future advancement focused on real-time edge AI applications and human-AI collaboration.

We are moving toward AI systems that can reason across modalities in real time watching a factory floor via camera, reading sensor data, and listening to operator voice inputs simultaneously to predict equipment failure before it happens. In healthcare, life sciences companies are already using multimodal AI to transform drug discovery and clinical care delivery, with foundation models now able to predict a protein’s 3D molecular structure in just a couple of minutes a process that once took months.

For companies building products today, the window to integrate multimodal AI as a competitive differentiator is still open but it is closing. The organizations that start building multimodal capabilities now will find themselves years ahead of those that wait.

Conclusion

Multimodal AI is redefining how intelligent systems understand the world by combining text, images, audio, and video into a single, unified experience. Businesses that adopt this technology now gain a significant competitive edge in automation, analytics, and customer engagement.

Ready to build intelligent, multimodal AI solutions? Mathionix Technologies delivers scalable AI development tailored to your business goals. Get in touch with Mathionix Technologies to start building smarter AI-powered products today

Grow Your Business with Custom AI Development Solution by Mathionix

Related Articles for You

Frequently Asked Questions

What is multimodal AI?

Multimodal AI refers to artificial intelligence systems that can process and understand multiple types of data simultaneously including text, images, audio, and video rather than handling only one data type at a time.

How is multimodal AI different from traditional AI?

Traditional or unimodal AI systems are trained on a single data type. A language model handles only text; an image classifier handles only images. Multimodal AI fuses several data streams at once, enabling richer understanding and more accurate outputs across complex, real-world scenarios.

What are the most popular multimodal AI models?

As of 2026, the leading multimodal AI models are GPT-4o by OpenAI, Gemini 2.5 by Google, and Llama 4 by Meta. Each offers different strengths in terms of context window size, processing speed, cost, and integration capabilities.

Is multimodal AI expensive for small businesses?

Not necessarily. Gemini 2.5 Pro offers free access with rate limits and no-code platforms like Hugging Face Spaces and Poe allow smaller teams to experiment with multimodal capabilities without any API costs. For custom AI development, the investment scales with scope and entry-level deployments are far more affordable today than they were even two years ago.